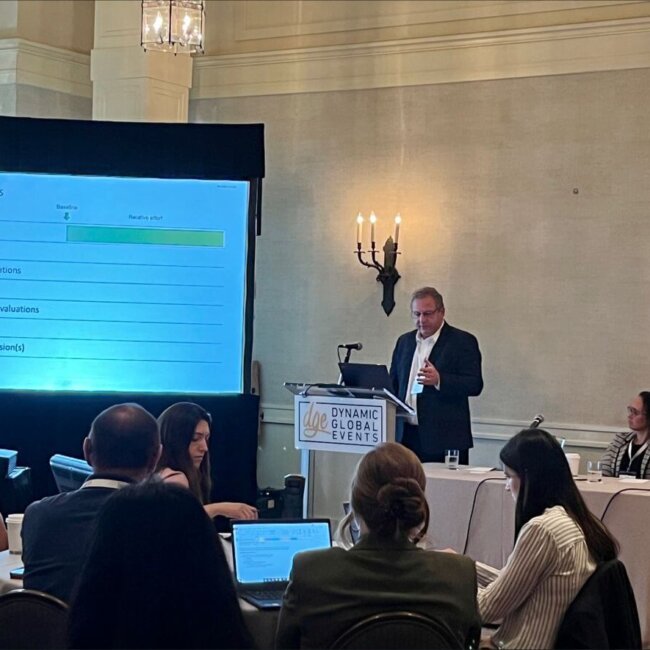

“The conference was very well organized, the venue was nice, the speaker topics had a good variety and were all well put together.” - Senior Human Factors Engineer, OUTSET MEDICAL

“Very informative and relevant to our work.” – Device Engineer, TEVA

Regulatory expectations for medical devices, combination products, and IFUs are constantly being updated, making it more essential than ever for human factors and usability professionals to be able to rely on cross-functional teams. But are you fully prepared to get the best feedback from colleagues who gained their design experience in other sectors, such as aviation, automotive, or military? And have you kept up with the psychological insights required to learn the most from your user groups?

DGE invites you to its 5th Human Factors Engineering & Usability Studies Congress – the industry’s most trusted and in-depth meeting on the technical, regulatory, and teamwork skills that your product pipeline needs! This year’s all-new agenda features detailed insights on:

- Full Pipeline Review to Determine Where HFE Intervention Is Needed

- Planning your Adoption of Artificial Intelligence and LLMs

- Leveraging Data to Avoid Redundant Testing

- Applying Non-Traditional Data and Tools as Value-Drivers

- Determining Best Practice for Moderation in Validation Testing

- Improving your Outreach to IRBs

…and much more! We look forward to seeing you in Philadelphia this October 23-24.

Professionals from pharmaceutical, biotechnology and medical device companies working in:

- User Experience / User Interface / UX / UI

- Engineering / Mechanical Engineering

- Device Design

- Regulatory Affairs

- Risk / Risk Management

- R&D / R&D Engineer

- Validation

- Mobility

- Medical Device

- Combination Products / Combo Products

- Product Development / New Product Development

- Industrial Design

- Handheld

- Pharmaceutical Development Operations

- Customer Experience

- Packaging

- Human Factors / Human Factors Engineer

- Device Development / Device Technology

- Design Controls

- Wearable / Wearables

- Technology / CTO

- Engineering / Device Engineering / Clinical Engineering

- Labeling

- Usability

- Design Assurance Engineer

- Quality / Product Quality

- Patient Experience

- Technical Support

- Architect / Design Architect / Solutions Architect

- Instrumentation

Had a great time at the 4th Human Factors Engineering and Usability Studies Congress in Philadelphia this week. Thank you to Dynamic Global Events (DGE) for the invitation and organizing this event, and to all the speakers and attendees for sharing their knowledge and participating in great discussions on medical device human factors!

Thank you DGE for inviting me to speak about correcting for the subjective bias that sometimes occurs when observing short-lived or self-corrected errors. I’m always delighted to converse with other Human Factors experts regarding best practices in designing a product that ensures ease of use and safety for the end user. Key takeaway for me: A just culture encourages self-reporting of errors and near-misses, which ultimately leads to better designs.

Maximize your brand's impact and visibility by becoming a valued sponsor. Shape the future of life sciences, connect with other industry leaders, and showcase your commitment to innovation. Elevate your presence - sponsor this conference and be at the forefront of advancements in science and technology.

Hit your networking target. Connect with the right professionals to amplify your event connections.

Discover networking opportunities to interact, engage, and build valuable connections.

After the event, all approved presentations are made available exclusively to our valued attendees. *Conditions may apply*

Immerse yourself in a dynamic, vibrant conference experience while unlocking new opportunities with life sciences' key decision makers.
